Read Time: 14mins

Published: 29/04/2024 | Updated: 12/03/2026

Cryptocurrency at a Glance

- Decentralized Networks: Instead of a central server, cryptocurrencies run on a blockchain. This is a distributed ledger that’s maintained by thousands of independent computers, also known as nodes, so that no single party can control or alter the system on its own.

- Transaction Verification: Networks use consensus mechanisms, such as Proof of Work (PoW) or Proof of Stake (PoS), to confirm transactions. Miners or validators check that the sender has sufficient funds and a valid digital signature before the transaction is added to a permanent block.

- Network Fees: You’ll need to pay fees (called gas on networks like Ethereum) so that validators process your transactions. These costs rise and fall with network traffic.

- Buying and Storing in Wallets: Usually, you should buy crypto from regulated exchanges like Coinbase or Binance. But to enhance your security, you should store cryptocurrencies in private wallets (hardware or software), which you can access only with your unique private key. That key is backed up by a seed phrase.

- Volatility: Crypto prices are driven by shifts in investor sentiment, institutional adoption, macroeconomic news, and regulatory frameworks. Because of this, even small changes in any of these drivers can cause significant price swings.

What Is Cryptocurrency?

Crypto refers to digital money that is secured by cryptography, recorded on a blockchain, and operated without central banks. Nowadays, cryptocurrency has a lot of uses, with the most common ones being transfers, investing, and even online services like betting and gambling. To better understand what crypto is, here’s a brief summary:

- Digital, not physical

- Peer-to-peer value transfer

- Secured by cryptography

- Recorded on a blockchain

However, you shouldn’t mix up crypto with blockchain. Owning cryptocurrency does not mean owning or investing in blockchain technology itself. If you’ve ever asked yourself, “What is blockchain?”, remember that blockchain is the underlying infrastructure, and crypto is one application of it.

Transactions, Confirmations & Fees

When you send cryptocurrency, the transaction enters a waiting area (often called the mempool) before it’s bundled into a block. Once it’s included in a block, it has one confirmation, and every block added to the chain after that adds another. The more confirmations a transaction has, the harder it is to reverse, so more confirmations signal greater finality and security.
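As a rough sketch of how confirmations are counted, the snippet below assumes the common convention that the block containing your transaction counts as confirmation number one. The function name and the block heights are illustrative only and don’t come from any particular wallet or explorer.

```python
from typing import Optional


def confirmations(tip_height: int, tx_block_height: Optional[int]) -> int:
    """Return how many confirmations a transaction has.

    tip_height: height of the newest block on the chain.
    tx_block_height: height of the block that includes the transaction,
                     or None if it is still waiting to be included.
    """
    if tx_block_height is None:
        return 0  # still pending, not in any block yet
    if tx_block_height > tip_height:
        raise ValueError("transaction block cannot be above the chain tip")
    # The including block itself counts as the first confirmation.
    return tip_height - tx_block_height + 1


# Hypothetical heights: the transaction was included 5 blocks before the tip.
print(confirmations(tip_height=840_005, tx_block_height=840_000))  # 6
```

Many services wait for several confirmations before treating a payment as final, and the exact number they require is their own policy choice.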

You also need to pay a network fee (called gas on Ethereum). A higher fee acts as a tip that encourages the network’s validators to prioritize your transaction. The speed and cost of this whole process vary significantly between networks because of their architectural trade-offs.

Older and more congested networks, such as Bitcoin and Ethereum, often have higher fees and slower speeds because of their limited throughput. In comparison, newer Layer-2 solutions and high-performance chains like Solana use advanced scaling techniques. This allows them to process thousands of transactions per second, each for a fraction of a dollar.
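To make the fee arithmetic concrete, here is a minimal sketch that assumes an Ethereum-style model, where the fee equals the gas used multiplied by a per-unit price (base fee plus tip). The 21,000 gas figure is the standard cost of a plain ETH transfer. The gwei prices are made-up values that stand in for a quiet and a busy network.

```python
GWEI = 10**9          # wei per gwei
WEI_PER_ETH = 10**18  # wei per ETH


def transfer_fee_eth(gas_used: int, base_fee_gwei: int, tip_gwei: int) -> float:
    """Fee in ETH = gas used x (base fee + tip), with prices given in gwei."""
    fee_wei = gas_used * (base_fee_gwei + tip_gwei) * GWEI
    return fee_wei / WEI_PER_ETH


# Hypothetical prices for the same simple transfer at two traffic levels.
quiet = transfer_fee_eth(21_000, base_fee_gwei=10, tip_gwei=1)
busy = transfer_fee_eth(21_000, base_fee_gwei=100, tip_gwei=5)

print(f"quiet network: {quiet:.6f} ETH")  # 0.000231 ETH
print(f"busy network:  {busy:.6f} ETH")   # 0.002205 ETH
```

The transfer uses the same amount of gas in both cases. Only the price per unit of gas changes with traffic, which is why fees can jump during busy periods.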

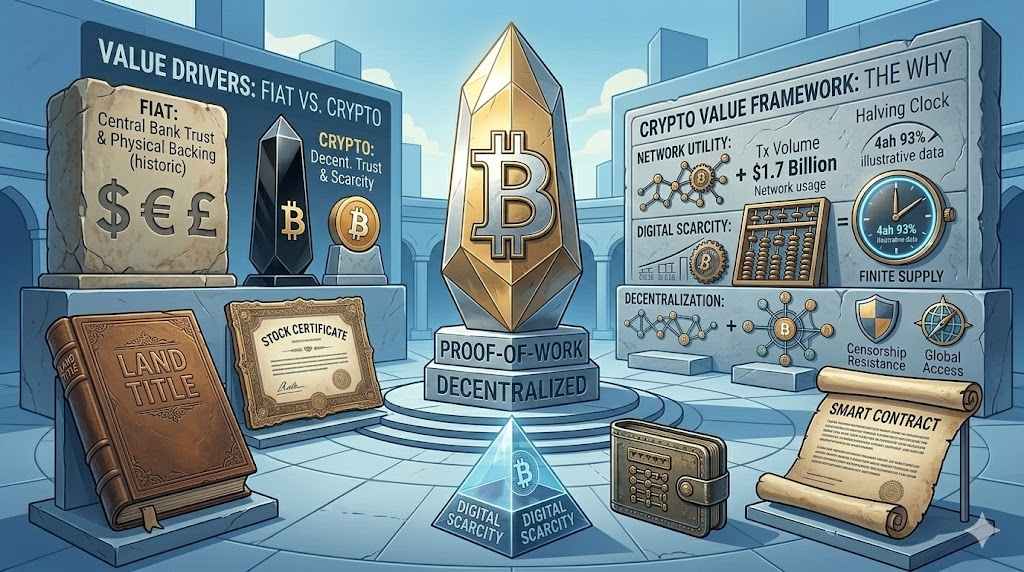

Cryptocurrency vs Traditional Money

Cryptocurrency and traditional money (fiat currencies) are two fundamentally different approaches to value. On one hand, fiat relies on the legal authority and stability of central governments. On the other hand, cryptocurrency is built on math-based protocols and decentralized networks.

The core differences show up in four areas:

- Decentralization: Traditional money is centralized, with supply and interest rates managed by central banks like the Federal Reserve. In contrast, cryptocurrencies are decentralized, which means they reduce reliance on intermediaries and are governed by a global network of computers.

- Reversibility: As traditional payment methods focus on protecting customers, banks can often reverse incorrect charges or wire transfers. Unfortunately, crypto transactions are irreversible. This means that if you send funds to the wrong address, you can’t recover them unless the recipient returns them.

- Custody: Another big difference is the custody. Banks usually hold traditional money, whereas cryptocurrency enables self-custody. In other words, you’re responsible for your own private keys, providing you with total autonomy. Yet, the security is also in your hands. This means that if you lose your keys, you also lose access to your assets.

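- Inflation Control: Finally, fiat supply can be expanded to manage economic crises. However, this can lead to devaluation over time. In contrast, some cryptocurrencies, such as Bitcoin, have a fixed maximum supply, which creates scarcity that many investors view as a hedge against inflation. Others follow different issuance rules, so always check the supply model of a specific coin.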

Now, let’s sum up all of this in a brief comparison table:

| Feature | Cryptocurrency | Traditional Fiat Money |
|---|---|---|
| Decentralization | High (distributed global network) | Low (managed by central banks) |
| Reversibility | Irreversible | Possible via bank intervention |
| Custody | Self-custody | Third-party (bank holds funds) |
| Inflation Control | Fixed or rule-based supply (varies by coin) | Supply adjusted by policy |

Why Does Cryptocurrency Have Value?

Cryptocurrency’s value arises from supply and demand, scarcity, utility, adoption, and market sentiment.

- Supply and Demand: Like any other asset, crypto prices are driven by supply and demand. If more people want to buy a coin than sell it, then the demand exceeds the supply and the value increases. But when demand falls, prices decline. The good thing about cryptocurrencies is that most of them have transparent issuance schedules, meaning investors know how much new supply will enter the market.

- Scarcity: Scarcity plays a crucial role in crypto’s value. More precisely, a limited supply of crypto coins can increase the perceived value. Plus, this concept strengthens the “digital gold” narrative of cryptocurrencies.

- Utility: Cryptocurrencies also gain value from their real-world functionality. Some enable fast and borderless payments, while others power dApps, smart contracts, DeFi, and other platforms. If a blockchain reliably solves real problems, like reducing transaction costs, its native token tends to benefit as more people use it.

- Adoption: Speaking of adoption, the more people, businesses, and institutions use a cryptocurrency, the stronger its network effect. Simply put, broader adoption can increase liquidity, usability, and trust. All of this contributes to the long-term growth and relevance of cryptocurrencies.

- Market Sentiment: Media coverage, regulation, macro trends, and investor confidence shape cryptocurrency demand. Because the market is still evolving, sentiment can change quickly, and long-term crypto price predictions often reflect these shifts.
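Bitcoin is a well-known example of a transparent issuance schedule and a capped supply. The sketch below adds up its block rewards, using the publicly documented rules: a 50 BTC starting reward that halves every 210,000 blocks, counted in whole satoshis. The function name is our own, and other coins follow different rules.

```python
SATS_PER_BTC = 100_000_000           # 1 BTC = 100 million satoshis
HALVING_INTERVAL = 210_000           # blocks between reward halvings
INITIAL_REWARD_SATS = 50 * SATS_PER_BTC


def total_supply_sats() -> int:
    """Sum every block reward until the reward rounds down to zero."""
    total = 0
    reward = INITIAL_REWARD_SATS
    while reward > 0:
        total += reward * HALVING_INTERVAL
        reward //= 2  # integer halving, so fractions of a satoshi are dropped
    return total


total = total_supply_sats()
print(f"{total / SATS_PER_BTC:,.4f} BTC")  # 20,999,999.9769 BTC
```

Because the schedule is fixed in the protocol rules, anyone can run the same calculation and see that the total stays just under 21 million.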

Mining, Validation & Network Security

If you’re wondering, “How do crypto transactions work?”, you should know that cryptocurrency relies on decentralized systems to validate transactions and keep the ledger secure. This process is handled by consensus mechanisms, such as Proof of Work and Proof of Stake. However, it’s important to remember that you don’t need to mine to use crypto.

The original consensus model is Proof of Work, which is used by Bitcoin. In PoW systems, miners compete to solve complex mathematical puzzles using computing power. The first miner to solve the puzzle can add a new block of transactions to the blockchain.

This keeps the network secure because compromising it would require enormous amounts of hardware and electricity, making fraud extremely expensive.
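The puzzle idea can be illustrated with a toy example. This is a simplified sketch and not how Bitcoin actually mines: it hashes a made-up text string together with a counter (the nonce) until the SHA-256 hash starts with a chosen number of zeros. The function names and the difficulty value are assumptions for illustration.

```python
import hashlib
from typing import Tuple


def block_hash(block_data: str, nonce: int) -> str:
    """Hash the block data together with a nonce."""
    return hashlib.sha256(f"{block_data}{nonce}".encode()).hexdigest()


def mine(block_data: str, difficulty: int) -> Tuple[int, str]:
    """Try nonces one by one until the hash starts with `difficulty` zeros."""
    prefix = "0" * difficulty
    nonce = 0
    while True:
        digest = block_hash(block_data, nonce)
        if digest.startswith(prefix):
            return nonce, digest
        nonce += 1


def verify(block_data: str, nonce: int, difficulty: int) -> bool:
    """Checking a solution takes a single hash, however long mining took."""
    return block_hash(block_data, nonce).startswith("0" * difficulty)


nonce, digest = mine("alice pays bob 1 coin", difficulty=4)
print(nonce, digest)
print(verify("alice pays bob 1 coin", nonce, difficulty=4))  # True
```

Each extra zero makes the search about 16 times longer on average, while verification stays instant. That imbalance is what makes cheating costly and checking cheap.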

On the other hand, Proof of Stake replaces energy-intensive mining with a staking model. Ethereum is one of the networks that use PoS. Instead of contributing computing power, validators lock up (stake) their cryptocurrency as collateral to validate transactions. Validators are rewarded for honest behavior and can lose part of their stake, a penalty known as slashing, for fraudulent activity.
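A toy model can show the basic intuition behind staking: the more a validator stakes, the more often it is chosen, and misbehavior costs part of the stake. Real networks such as Ethereum use far more elaborate selection and penalty rules. The validator names, stake sizes, and the 50% penalty below are all hypothetical.

```python
import random
from collections import Counter
from typing import Dict


def pick_validator(stakes: Dict[str, float], rng: random.Random) -> str:
    """Pick one validator at random, weighted by the size of its stake."""
    names = list(stakes)
    weights = [stakes[name] for name in names]
    return rng.choices(names, weights=weights, k=1)[0]


def slash(stakes: Dict[str, float], name: str, fraction: float) -> float:
    """Remove a fraction of a validator's stake and return the penalty."""
    penalty = stakes[name] * fraction
    stakes[name] -= penalty
    return penalty


stakes = {"alice": 60.0, "bob": 30.0, "carol": 10.0}
rng = random.Random(42)  # fixed seed so the run is repeatable

# Over many rounds, selection frequency tracks each validator's stake share.
counts = Counter(pick_validator(stakes, rng) for _ in range(10_000))
print(counts.most_common())
```

Calling `slash(stakes, "carol", 0.5)` would cut carol’s stake from 10.0 to 5.0, which also lowers her chance of being selected in later rounds.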

Despite these differences, both systems share the same goals: keeping the network decentralized and transparent.

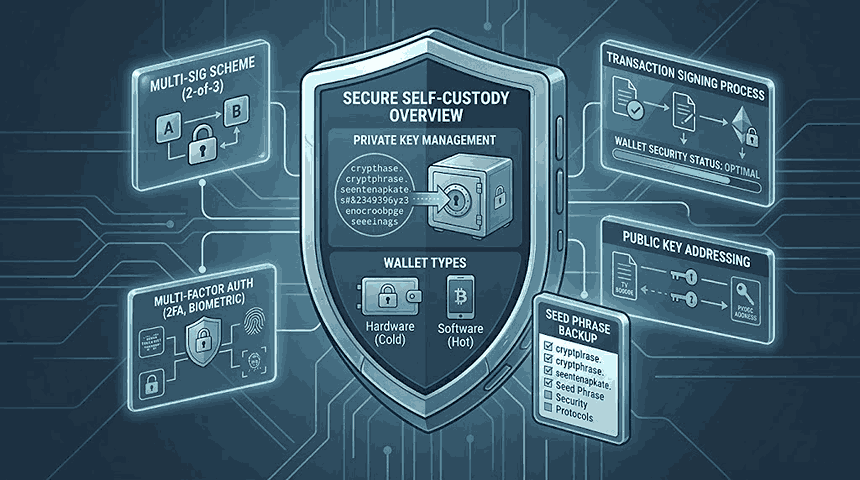

Crypto Wallets & Self-Custody (Security Basics)

This section answers the question “Is cryptocurrency safe?” by covering the basics of crypto wallets. While crypto runs on secure blockchain technology, protecting your funds comes down to how you manage your crypto wallet and private keys. If you gamble or bet online, it also means using sports betting tools and resources that help keep your funds safe.

Custodial vs Non-Custodial Wallets

When it comes to custodial vs non-custodial wallets, the biggest security difference is who controls your private keys.

On the one hand, there are custodial wallets that exchanges like Coinbase and Binance provide. More precisely, when you store crypto on an exchange, the platform controls your private keys on your behalf.

This comes with a high level of convenience: the platform handles password recovery and customer support, and trading is easy. Still, you need to trust a third party. If the exchange is hacked or freezes withdrawals, your access to your funds may be limited or lost.

On the other hand, non-custodial or self-custody wallets give you full control over your private keys. In this case, no intermediary can freeze or manage your funds. While this increases independence, you’re entirely responsible for your security. Plus, there’s no password recovery option.

Hot Wallets vs Cold Wallets

Wallets are also categorized based on whether they’re connected to the internet.

A hot wallet is connected to the internet, which makes it fast, easy to access, and convenient for short-term, everyday use, like trading, sending payments, or interacting with apps.

However, being online also exposes it to cyber threats like hacking and phishing.

A cold wallet, by comparison, keeps your keys offline. Cold wallets typically rely on a hardware device and physical backups, which is why they’re safer for long-term storage of cryptocurrencies.

Here’s a brief breakdown:

| Feature | Hot Wallet | Cold Wallet |
|---|---|---|
| Internet Connection | Online | Offline |
| Security Level | Moderate | High |
| Best For | Frequent transactions | Long-term storage |

Private Keys & Seed Phrases

Your private key is a cryptographic code that proves you’re the owner of the cryptocurrency. Simply put, whoever controls the private key controls the funds.

In addition, crypto wallets generate a seed phrase, also called a recovery phrase. It’s usually 12 or 24 random words that serve as a human-readable backup of your private keys. This phrase is important because if your device is lost, damaged, or stolen, you can use it to restore access to your funds.

Yet, if you lose your seed phrase and can’t access your wallet, your funds are lost for good. There’s no central authority that can help you reset or recover it. Plus, if someone else gains access to your recovery phrase, they can restore your wallet on their own device and transfer your crypto.
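To give a sense of why a short list of words is so hard to guess, the sketch below works out how much randomness a phrase encodes. It assumes the wallet follows the widely used BIP-39 standard, which the text above doesn’t name: each word comes from a 2,048-word list, and a few bits at the end act as a checksum.

```python
from typing import Tuple

WORDLIST_SIZE = 2048                             # BIP-39 word list length
BITS_PER_WORD = WORDLIST_SIZE.bit_length() - 1   # 2048 = 2**11, so 11 bits


def phrase_bits(word_count: int) -> Tuple[int, int]:
    """Return (entropy_bits, checksum_bits) for a BIP-39 style phrase."""
    total_bits = word_count * BITS_PER_WORD
    # BIP-39 adds 1 checksum bit per 32 bits of entropy,
    # so the total is always 33 times the checksum length.
    checksum_bits = total_bits // 33
    return total_bits - checksum_bits, checksum_bits


for words in (12, 24):
    entropy, checksum = phrase_bits(words)
    print(f"{words} words: {entropy} bits of entropy, {checksum} checksum bits")
# 12 words: 128 bits of entropy, 4 checksum bits
# 24 words: 256 bits of entropy, 8 checksum bits
```

With 128 bits there are 2**128 possible phrases, far too many to guess by brute force. Someone who sees or copies the words needs no guessing at all, which is why the phrase must stay private.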

How to Buy, Store & Send Crypto (Beginner Path)

Getting started with cryptocurrency can be overwhelming at first. But once you learn the steps, you shouldn’t have any issues. Here’s a simple overview of the main steps you should follow.

Choose Exchange/On-Ramp

The first step is deciding where to buy crypto, and the biggest decision here is choosing between centralized exchanges (CEXs) and decentralized exchanges (DEXs).

Most beginners start with a centralized exchange (CEX), as this acts as an intermediary that lets you deposit traditional currencies and purchase crypto without any issues. These platforms usually manage the security, account recovery, and custody for you.

In comparison, a decentralized exchange (DEX) lets you trade from your own wallet without relying on a central authority. While DEXs provide greater control and privacy, they require you to already own crypto and to know how to manage a wallet.

Thus, starting with a CEX can be the most straightforward and accessible option for newbies.

Buy Crypto or Stablecoins

The next step is to purchase cryptocurrency. Many beginners choose major coins, like Bitcoin and Ethereum, but there are also others who prefer starting with stablecoins (USDT, USDC).

More precisely, stablecoins are cryptocurrencies designed to hold a stable value by being pegged to another asset, most commonly the US dollar. Due to their steady price, they’re used as a bridge between traditional money and volatile crypto assets.

Optional Wallet Transfer

Once you own crypto, you can either keep it on the exchange or move it to a personal wallet. This step is optional, but transferring crypto to your own wallet is the better choice if you prioritize long-term security and self-custody.

Send Test Transaction

Last but not least, send a small test transaction before sending a large amount of crypto. This confirms that the wallet address and network are correct. If the test transfer arrives successfully, you can proceed with the larger transaction.
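Some address formats include a built-in checksum that catches many copy-and-paste mistakes before you send anything. As a hedged illustration, the sketch below checks legacy Bitcoin addresses, which use the Base58Check encoding. It doesn’t cover other networks or newer Bitcoin address types, and passing the check doesn’t prove the address belongs to the person you intend to pay. The function names are our own, and the sample is the widely published address from Bitcoin’s first block.

```python
import hashlib

BASE58_ALPHABET = "123456789ABCDEFGHJKLMNPQRSTUVWXYZabcdefghijkmnopqrstuvwxyz"


def base58_decode(text: str) -> bytes:
    """Decode a Base58 string; raises ValueError on an illegal character."""
    num = 0
    for char in text:
        num = num * 58 + BASE58_ALPHABET.index(char)
    body = num.to_bytes((num.bit_length() + 7) // 8, "big")
    # Each leading '1' in Base58 stands for one leading zero byte.
    leading_zeros = len(text) - len(text.lstrip("1"))
    return b"\x00" * leading_zeros + body


def passes_checksum(address: str) -> bool:
    """True if a legacy Bitcoin address has a valid Base58Check checksum."""
    try:
        raw = base58_decode(address)
    except ValueError:
        return False
    if len(raw) != 25:  # 1 version byte + 20-byte hash + 4 checksum bytes
        return False
    payload, checksum = raw[:-4], raw[-4:]
    expected = hashlib.sha256(hashlib.sha256(payload).digest()).digest()[:4]
    return checksum == expected


sample = "1A1zP1eP5QGefi2DMPTfTL5SLmv7DivfNa"
typo = sample[:-1] + "b"  # last character copied incorrectly

print(passes_checksum(sample))  # True
print(passes_checksum(typo))    # False
```

A checksum only catches typos. It can’t tell you whether you picked the right network or the right recipient, so the small test transaction is still worth doing.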

Risks, Scams & Safety Checklist

Understanding the potential crypto scams and risks is a must, especially for beginners. Let’s go through the most important ones.

Volatility of Crypto Prices

As we already mentioned, crypto prices are highly volatile. In fact, the prices can fluctuate hourly or even within minutes, depending on the market conditions and any other changes in the main drivers. While rapid gains are possible, so are sharp declines.

Lack of Recoverability

The lack of recoverability is one of cryptocurrency’s core aspects. Once you send funds to the wrong address, you can’t recover them. This is because there’s no central authority that will reset your access or reverse the payment. That’s why it’s so important to double-check your transaction details and keep your seed phrase and private keys secure.

Phishing & Fake Support

Phishing is one of the most common scams in crypto. More precisely, malicious actors can create fake websites, emails, or social media profiles that mimic legitimate crypto platforms. They may pose as support staff, wallet providers, or exchange employees.

Keep in mind that no legitimate support team will ask for your private key or seed phrase. So, if someone requests this information, it’s automatically a red flag.

Wallet Access Approvals & Scams

If you use dApps or participate in DeFi, you’ll need to approve wallet permissions. These approvals allow smart contracts to spend certain tokens on your behalf.

However, fraudulent contracts can request unlimited access and then drain the approved tokens. Therefore, review every approval request carefully and never grant permissions to suspicious sites or contracts.
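On Ethereum-style tokens, an approval is stored as a number: the most a contract may spend. Requests for “unlimited” access commonly use the largest value a 256-bit number can hold. The sketch below labels an approval amount on that basis. The function name and the cut-off used to call something unlimited are assumptions for illustration, not part of any wallet’s API.

```python
MAX_UINT256 = 2**256 - 1  # largest value a 256-bit allowance can hold


def classify_allowance(amount: int) -> str:
    """Label an approval amount as 'none', 'limited', or 'unlimited'."""
    if not 0 <= amount <= MAX_UINT256:
        raise ValueError("allowance must fit in an unsigned 256-bit integer")
    if amount == 0:
        return "none"
    # Heuristic (an assumption of this sketch): anything in the top half of
    # the range is far beyond any real balance, so treat it as unlimited.
    if amount >= 2**255:
        return "unlimited"
    return "limited"


# Amounts are in a token's smallest unit; 18 decimals is a common choice.
print(classify_allowance(0))               # none
print(classify_allowance(100 * 10**18))    # limited
print(classify_allowance(MAX_UINT256))     # unlimited
```

An unlimited approval stays in place until you revoke it, so approving only the amount you need limits the damage if a contract turns out to be malicious.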

Common Beginner Mistakes

Some of the most common beginner mistakes include:

- Sending crypto on the wrong network

- Copying wallet addresses incorrectly

- Storing seed phrases online without protection

- Falling for suspicious crypto schemes that guarantee big profits

- Investing more than you can afford to lose

So, now that you’re aware of the possible scams, here are a few things you should do to stay safe:

- Don’t share your private key or seed phrase with anyone.

- Write down your recovery phrase and store it offline.

- Enable two-factor authentication on the exchange platform you’re using.

- Always double-check wallet addresses before sending funds.

- Send a small test transaction before large transfers.

- Verify website URLs to avoid phishing attacks.

- Don’t click on links from suspicious senders.

- Review wallet approvals and revoke unnecessary access.

- Be skeptical of any site that promises unusually high rewards.

- Invest only money you can afford to lose.

Regulations & Responsible Use

Cryptocurrency regulations vary by country. Some governments have clear legal frameworks for digital assets, while others impose strict restrictions. For instance, the US regulates crypto through multiple agencies and evolving compliance rules. In contrast, the EU has a broad regulatory framework that standardizes oversight across member states.

These differences are crucial to how exchanges operate, how taxes are applied, and what protection you have. In many countries, centralized platforms implement anti-money laundering (AML) and know-your-customer (KYC) requirements. This means that you’ll need to verify your identity before trading.

Some view this as reducing privacy, but such measures also add legitimacy and consumer protection to the industry.

Compliance is just as important. Use licensed platforms, understand your local tax obligations, and follow legal updates so you can make informed investing decisions. Depending on your location, cryptocurrency transactions may be subject to capital gains tax, reporting requirements, or specific trading restrictions.

When choosing an exchange or wallet provider, transparency is essential. Our How We Rate & Review methodology explains the criteria we use to assess licensing, security standards, fee transparency, fund protection mechanisms, and responsible-use policies.

Secure usage is also a must. Even in regulated markets, you need to protect your private keys, avoid scams, and practice safe storage habits for your seed phrase.

Basically, you need to learn how to responsibly use crypto, just as our Responsible Gambling Guide shows how to act when gambling.

Key Crypto Terms You Should Know

- Blockchain: A distributed digital ledger that records transactions across a network of computers securely and transparently.

- Wallet: A digital tool (software or hardware) that stores private keys and allows you to send, receive, and manage crypto.

- Private key: A secret cryptographic code that proves ownership of your crypto.

- Seed phrase: A 12- or 24-word recovery phrase that restores access to your wallet if your device is lost or damaged.

- Wallet address: A string of characters you can share to receive cryptocurrency.

- Stablecoins: Cryptocurrencies designed to hold a stable value by being pegged to another asset, most commonly the US dollar.

- Gas fees: Transaction fees you need to pay to process and validate the transaction on a blockchain network.

- Mining: The process of validating transactions in Proof of Work systems using powerful computers.

- Staking: The process of locking crypto to help secure a Proof of Stake network and validate transactions.

- Market cap: The total value of a cryptocurrency, calculated by multiplying the price by the circulating supply.

- Crypto exchange: A digital marketplace or platform that enables buying, selling, and trading of digital assets.

- Smart contract: A self-executing code on a blockchain that runs actions when pre-defined conditions are met.

- DeFi: Blockchain-based financial system without traditional intermediaries for peer-to-peer lending, borrowing, and trading using smart contracts.
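The market cap definition above is a simple multiplication. Here is a minimal worked example with made-up numbers. The price and supply don’t describe any real coin.

```python
def market_cap(price: float, circulating_supply: float) -> float:
    """Market cap = current price x number of coins in circulation."""
    return price * circulating_supply


# Hypothetical coin: $2.50 per coin, 40 million coins in circulation.
print(f"${market_cap(2.50, 40_000_000):,.0f}")  # $100,000,000
```

Because the price is part of the formula, market cap moves with every price change, even when the circulating supply stays the same.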

FAQ – Crypto Basics

How does cryptocurrency work?

Cryptocurrency works as a decentralized digital currency that’s secured by cryptography and doesn’t rely on any intermediaries. It operates on blockchain technology to record, verify, and store all transactions across a network of computers.

Is crypto safe for beginners?

Crypto carries real risks for beginners because of its high volatility, uneven regulation, and security threats like hacks or scams. Although it can offer high returns, you need to research first and invest only as much as you can afford to lose.

What is a crypto wallet?

A crypto wallet is a digital tool that stores your private keys and lets you send, receive, and manage cryptocurrencies.

What are stablecoins?

Stablecoins are cryptocurrencies pegged to another asset, most commonly the US dollar. Due to this, they’re designed to maintain a steady value.

What is blockchain in simple terms?

Blockchain is a distributed digital ledger that records transactions across a network of computers.

Do I need a wallet to use cryptocurrency?

Yes, you need a wallet to use cryptocurrency, especially if you prioritize your safety. The choice of wallet depends only on your needs and preferences.

Fact checked by

josip.putarek@bitedge.com